|

Digital Privacy: Problem, Solutions, and Implications

The Current Picture: The internet's impact on society has been immeasurable. Search engines, social networks, and the infinite amount of information these contain have transformed how we receive and process data. Our Facebook friend list, our Google search history, our Amazon purchases, and the reviews we've left on all of these platforms... they all come down to data. Everything comes down to data. Data grows. Today, there's data for every person — for every purpose. Data that's power. Paradoxically, this data explosion has left most people powerless, as the digital age has stripped most people of their privacy. For renowned computer scientist Andreas Weigend, privacy is dead. To Weigend, "the time has come to recognize that privacy is now only an illusion" (Weigend 47). He might be right. The current digital privacy model — The Notice and Choice model — is not built around protecting user privacy. Instead, it serves as a capitalistic market-place in which users relinquish part of their privacy in exchange for a service. As the name suggests, the Notice and Choice model involves notifying users about the web site's privacy policies and allowing the users to decide whether they engage with the site or not (Athey et al. 1). Theoretically, it makes perfect sense; in practice, it fails — miserably. In an article published under the law firm Cozen O'Connor, Harvard law school graduate Brian Kint explains how this Notice and Choice model is more of a Take it or Leave it: "When faced with the choice of access or no access, users will choose access, no matter how draconian an organization's information sharing practices may be" (Kint). Susan Athey, professor of economics at Stanford University, along with her colleagues Christian Catalini and Catherine Tucker (MIT professors in technological innovation and management, respectively), explored how the Notice and Choice model falls short in regards to the complexity of human behavior. Their research paper, "The Digital Privacy Paradox: Small Money, Small Costs, Small Talk", studies the results of MIT's digital currency experiment, in which undergraduate students were asked about their digital privacy concerns and given $100 worth of Bitcoin. The students were then presented with a series of online privacy decisions, which were incentivized at different levels. Through the examination of this data, the researchers studied how users' privacy choices can be affected by incentives, reaching three main findings:

While Athey and her colleagues specifically explore why the model is too shallow to cope with the complexities of human behavior, other sources point out how Big-Tech is not interested in solving the problem. In their book Blown to Bits, Hal Abelson, Harry Lewis, and Ken Ledeen claim that "corporations, and other authorities are taking advantage of the chaos" (Abelson et al. 4). Derek Banta explains that the root of the problem lies in the fact that there is not one entity whose sole objective is to protect user privacy: "Promises are really hard to keep when you're trying to ride two horses. Take your credit card company as an example. They do things to protect your privacy, but at the same time, they have a data monetization strategy built right into their business model. Why is that? Because the internet and e-commerce are built on the value of private information. This is what creates the dilemma and, ultimately, the vulnerability" — the privacy vulnerability (Banta 3:58 — 4:24). On top of this, Brian Kint explains "privacy notices are [...] written from the perspective of protecting the organization from legal liability rather than from the perspective of genuinely and clearly informing users as to how their personal information might be shared" (Kint). With the system broken, Big-Data companies are intensively data-mining their users. In the article titled "The WIRED Guide to Your Personal Data (and Who Is Using It)", journalist Louise Matsakis comprehensively goes over the extent to which Big-Data companies are harvesting user data. As expected, "social media posts, location data, and search-engine queries" are all getting tracked through digital tools, like cookies, pixels, and tags. However, it can get a lot more invasive as some companies may track how you interact with a website/app — where you click, tap, zoom... (Matsakis). In their book Born Digital, Harvard law school professors John Palfrey and Urs Gasser go over what this level of tracking entails for "Digital Natives" — people born after 1980 who've grown up surrounded by technology: "By the time a Digital Native enters the workforce, there are hundreds — if not thousands — of digital files about her, held in different hands, each including a series of data points that relate to her and her activities. There is no way for a Digital Native to know that each of these files exists. [...] It would be impossible for her to stay on top of managing the files or even sorting out their sources and contents. And she wouldn't be able to correct the information even when it turned out to be inaccurate" (Palfrey and Gasser). Data Breaches, the Lack of Consent, and the Lack of Informed Consent: Perhaps what's more worrying is that all of this data leaks (Abelson et al. 3). In the last couple of years, Uber, Equifax, Sony, and Yahoo have made major headlines after failing to protect their users' data — and there's no real reason for optimism (Armerding). Just in 2018, Quora, Under Armour, Marriot, Google, amongst many others, faced major data breaches (Leskin). Most notably, the Cambridge Analytica Data Scandal took place. The far-right wing political consulting group exploited "a loophole in Facebook's API that allowed third-party developers to collect data not only from users of their apps but from all of the people in those users' friends network" (Romano). Specifically, Cambridge Analytica simply surveyed 270,000 Facebook users through a 3rd party app; in doing so, they not only gathered the information of the 270,000 users who had agreed to the app's privacy terms but also that of their friends... amounting to a data pool of 87 million users (Chang). Cambridge Analytica then analyzed these users' likes, grouping them into different psychological categories, which it then used to launch targeted political advertisements (Hannes Grassegger & Mikael Krogerus). While evaluating the impact Cambridge Analytica has had on world politics could easily be the subject of an entire research paper (the firm was involved in Trump and Brexit campaigns), the key takeaway from this case study is that neither Notice or Choice was given to most of the 87 million users. For this Mark Zuckerberg apologized on a CNN interview: "This was a major breach of trust and I'm really sorry that this happened" (Zuckerberg). Notice that not notifying users that their data was being shared was a mistake in terms of Facebook's policy, but it is actually common practice for most in the digital industry. In fact, in his New York Times article titled "This Article is Spying on You", Carnegie Mellon computer science professor Timothy Libert explains that "only 10 percent of these outside parties [that mine your information] are disclosed in privacy policies of the news sites we studied, meaning even diligent readers will never learn who collects their data" (Libert). In other words, the Notice and Choice model is not applied across digital platforms, and even when it is, it omits critical information about the true scope of data collection. For the sake of the current model, however, let us assume that the Notice and Choice model is applied consistently and that it reports 100% of the data-mining third parties. Even if this is the case, and the user consents, the model falls short due to the lack of informed consent. As discussed previously, the model fails to provide a framework in which users can become informed participants of the digital community. Zuckerberg himself recognized this in April of 2018, when he testified for the Senate of Commerce and the Senate of Judiciary committees to inform their investigation on "Facebook, Social Media Privacy, and the Use and Abuse of Data": Specifically, when senator Lindsey Graham asked, "do you think the average consumer understands what they're signing up for?", Zuckerberg responded: "I don't think that the average person likely reads that whole document" (Committee on the Judiciary). That's partly on the user for not reading the document, but it's also on Facebook for taking their users' consent as good despite knowing it is uninformed. In this regard, perhaps some sort of government intervention is needed to hold firms like Facebook accountable, or at least make the people who accept Facebook's terms fully aware of what that entails for their privacy. Historically, there are cases in which government intervention was needed to address the lack of informed consent. Take the tobacco industry. In the mid-1960s more than 40% of the US adult population smoked tobacco (American Lung Association). However, throughout the 20th century and particularly since the 1950s, medical research had been consistently finding that tobacco smoke was harmful to human health (Proctor). The 1964 Surgeon's General report officialized these findings, leading to a series of government regulations aimed at informing the smokers of the adverse health effects that this activity entailed: by 1966 the Cigarette and Labelling and Advertising Act of 1965 came into effect and required a vague health warning to accompany each pack; by 1970 the Public Health and Cigarette Smoking Act made this labeling stronger; by 1984 the Comprehensive Smoking Education Act required tobacco packages and advertisement to rotate between four affirmative warnings; by 2009 former President Barack Obama signed the Family Smoking and Prevention Tobacco Control Act, which gave the FDA the power to regulate the industry, and led to the current push for graphic warnings (Center for Disease Control and Prevention). All in all, these measures helped decrease smoking from more than 40% in 1965 to 14% in 2017 (American Lung Association). It is important to note that for the US government, consent proved to be insufficient. It wasn't a matter of consent — of deciding to smoke — but of informed consent — of deciding to smoke knowing about the adverse health consequences. The same argument is valid to support regulation within the digital privacy realm. For a user to truly have digital privacy it's not only about consent — about agreeing to the site's terms — but also about informed consent — about agreeing to the site's terms knowing about the privacy implications. The Push for Regulation: Warren vs Big-Tech: Elizabeth Warren is one of the few politicians aware that there is a systematic problem and bold enough to point it out. The rising Democratic candidate for the US 2020 presidential election has made it clear that if she wins, she'll make sure to regulate the Big-Tech industry. In a Medium article titled "Here's how we can break up Big-Tech," Warren explains her plan to break up data-mining giants such as Amazon, Google, and Facebook; according to her, these companies have "too much power — too much power over our economy, our society, and our democracy" (Warren). That's a claim that is hard to challenge. More specifically, Warren gives three reasons to sustain her proposal to Break Up Big Tech: Number one, that these Big-Tech firms are engaging in anti-competitive behavior; Number two, that Big-Tech firms should not be capable of undermining the USA's electoral security; Number three, that Big-Tech firms are exploiting users' privacy. Warren has been very active on Twitter advocating for her solution. In fact, to this date, Warren has tweeted more than 100 times about how breaking-up big tech would improve the industry's practices. This has not gone unnoticed by major Big-Tech players. At an internal Facebook meeting in July — which's audio was leaked to The Verge by one of the employees who assisted — Zuckerberg criticized Elizabeth Warren for thinking that "the right answer is to break-up the companies" (Zuckerberg / The Verge). Zuckerberg justified his position by recognizing that while they "care about [their] country and want to work with [their] government [...] if someone is going to challenge something that existential you go to that mat, and you fight" (Zuckerberg / The Verge). Major Big-Tech player Bill Gates, who is also the world's most generous philanthropist, agrees with Zuckerberg in that breaking up Big-Tech is not the answer. In an interview with Bloomberg, the Microsoft founder explained that for him Warren's proposal is overly simplistic: "If there is a way a company is behaving that you want to get rid off, then you should just say 'okay that's a banned behavior'. Splitting the company in two and having two people doing the bad thing doesn't seem like a solution" (Gates). Analyzing Warren's proposal, it's evident that she aims at providing a simple solution to a very complicated problem — which makes succeeding much harder. Warren makes distinctions between all the Big-Tech companies to present the many problems within the industry, but then reduces all of these problems to a single solution. For example, Warren has lumped Apple — a product selling company that does not capitalize on their users' data — with data selling companies Google and Facebook, and both product selling and data selling company Amazon. It does not add up. What also doesn't add up is the fact the for some arbitrary reason, the split-up will only target firms with annual revenues of more than $25 billion... but size in itself is not a crime — behavior is. Moreover, many of the behaviors Warren is so blatantly calling out are not specific to Big-Tech. Warren's rationale for splitting Apple is based on their ability to discriminate against third-party developers in favor of their own apps. In an interview with CNBC, Tim Cook disagrees with her by explaining the basis of any market: "You know [...] if you own a shop on the corner, you decide what goes in your store” (15:10 — 15:30). Certainly, there is a political component to Warren's proposal. Perhaps sparking this debate has been part of Warren's strategy to gain voters' preference. After all, before she is able to implement anything she has to win the electoral race. It might be too harsh to compare Trump's Build the Wall proposal with Warren's Break-Up Big-Tech proposal, but there are certain similarities. Number one, both capitalize on people's fear. For Trump, it's been the fear of immigration; for Warren, it's been the fear of surveillance. Number two, they've both directed these fears to a single entity. For Trump, the scapegoat are the immigrants; For Warren, the scapegoat are the Big-Tech companies. Of course, there's a huge moral difference between singling out marginalized immigrants with little political representation and singling out multibillion-dollar businesses that spend millions of dollars in political lobbies. However, the political strategy is the same. To a certain extent, it's populism. Why has Warren called out Facebook and Amazon much more than Google or Apple? It's not about revenue or data collection capabilities, but political impact. The Cambridge Analytica breach deteriorated Facebook's reputation, and ever since Jeff Bezos became the richest person in the world there's been certain resentment towards Amazon — and Warren is capitalizing. In reality, Big-Tech firms are not evil. In fact, they're doing lots of good: Facebook is connecting people in unimaginable ways, Amazon is providing a market-place for entrepreneurs to thrive, Google provides tools that are accessible to all, and Apple has saved lives by alerting their Apple Watch users if the watch senses signs of Afib, amongst others. There's still the problem of digital privacy, but solving that problem by splitting the firms and hoping that would somehow fix the industry seems reckless. Perhaps it's too unfair to judge the content of a proposal as if it were a finalized bill. Warren has been successful in getting a nation-wide conversation started, making people aware of the degree of power that these Big-Tech companies have, and at least attempting to address an evident issue. Digital Privacy as an Economic Problem: In this sense, California is far ahead than the rest of the United States. After the Cambridge Analytica scandal, the state took steps to protect user privacy, passing the California Consumer Privacy Act of 2018 (CCPA). The CCPA will take into effect in 2020, and aims at providing a safe web-surfing environment. To accomplish this, the legislation grants four new laws to Californian users:

However, in doing so, it wrecks the business model of data-mining companies. Why? Because why would any person want their data to be used and sold if it's not necessary? Everyone is going to opt-out. That's like going over to store and having the option to either pay or not pay... but either way, you get the product. It makes no economic sense and is unsustainable. Regulators, however, want to take it even further. California Governor Gavin Newsome has proposed the idea of a 'data dividend' aimed at rebalancing the power structure between Big-Tech companies and their users. According to the governor, “California’s consumers should also be able to share in the wealth that is created from their data” (38:50 — 39:53). Nevertheless, users do reap the benefits of their data: users have access to online services, information, and networks for free — at least in terms of money. Up to now, data has been the way users have been paying for these 'free' services, and all of these regulations simply put at risk the free model. As Dan Rua explains, the only reason why most sites are free is "because of advertisements working" (Rua qtd. in Vice). If Big-Data companies' ability to collect and sell information is restrained, these companies will simply need to find another way to make a profit. As such, there would be a systematic switch to "paid alternatives such as the Freemium model, the Fee-for-service model or the Subscription model" which would, in turn, worsen the digital divide and further inequality (Sanchez and Viejo 2). As expected, this is something most users don't even want. In the aforementioned study "Small Money, Small Costs, Small Talk", professors Athey, Catalini, and Tucker found that "when expressing a preference for privacy is essentially costless as it is in surveys, consumers are eager to express such a preference, but when faced with small costs this taste for privacy quickly dissipates" (Athey et al). Caleb Fuller examines this dissonance from a purely economic lense and goes even further by claiming that digital privacy paradox may not even exist: "it is possible to explain the so-called "privacy paradox" by showing that individuals express greater demands for digital privacy when they are not forced to consider the opportunity cost of that choice" (Fuller 28). Examined economically, for most people privacy is simply a higher quality good — they see value in it, but are not willing to pay for it. Fuller concludes that "consumers prefer exchanging information to exchanging money" (Fuller 28). Does this signify a market failure? Fuller believes not. The economics professor identifies the three sources where digital privacy market failure could potentially arise — asymmetric information between businesses and users, users' behavioral biases, and data resale externalities — and rejects these. Regarding asymmetric information, Fuller claims that in every complex good market no one is perfectly informed. Regarding behavioral biases, Fuller explains that users are not biased but simply reacting to constraints (users prefer exchanging data than paying for the service). Regarding data reselling externalities, he explains that these are priced in at the moment of the initial transaction. All in all, Fuller concludes that evidence for market failure lacks and that, as a result, the push for regulation should be reconsidered. Fuller, however, is too absolute in his analysis. While he identified a key issue regarding asymmetric information in the market — that “respondents clearly are far less well-informed about how Google uses their data than that personal information is collected” — Fuller overlooked its potential implications. When he later claims that the data reselling externalities are priced in at the moment the transaction is made, that opposes his own finding that individuals are uninformed about how their data is used. In other words, if users don’t know how their data is used, how could they possibly price data reselling externalities the moment they engage in the transaction? Moreover, even if users are well-informed, they might then fall to behavioral biases, as they might irrationally believe that data reselling is not going to affect them specifically. Put more succinctly, optimism bias. Again, going back to Tobacco, this is similar to the fact that amongst smokers, x% think that they’ll end up developing smoke related medical health problems, while xx% end up doing so (). More economically, asymmetric information and behavioral biases regarding data reselling create the market failure. Yet even if market failure does not apply, it is hard to compare personal data to other complex goods, as privacy is considered a fundamental human right. The notion of selling a human right is — to say the least — problematic. Nevertheless, Fuller’s economic analysis is still an incredible contribution to the conversation as it sheds light on how we should go about solving the issue: It’s all about changing demand... about changing consumer preferences. If we increase the value users put on their privacy, users will start demanding privacy preserving options and be more willing to pay for these. In doing so, the companies many politicians have blatantly called out for simply reacting to consumer demand will adjust. As such, perhaps we should not start with regulation but with education. First Education. Then Regulation…? Major Big-Tech companies recent policy changes in regards to political advertisement is proof of how consumer preferences can influence how these businesses operate. As such, on October 30 2019, Twitter’s CEO, Jack Dorsey, announced that Twitter would be banning political advertisements. While the policy has been amended to allow for activism, social stewardship, and civic engagement, put simply, Twitter’s new policy bans all political advertisements — and they’re already enforcing it. The rationale behind this radical but responsible change lies in their belief that “political message reach should be earned, not bought” (). Less than a month after Twitter’s announcement, Google hopped on through an official blog post in which they communicated that starting January 2020 they’ll be enforcing new world-wide regulations on political advertisements. While Google will not ban these, they’ll be limiting the targeting capabilities of advertisers: psychographically to “contextual targeting” (interests), and demographically to “age, gender, and general location (postal code level)” (). Moreover, in the same post, Google reiterated its commitment towards debunking Fake News, and creating a transparent online ecosystem. While it’s true that Facebook has not followed suit, they have explained how they’re getting ready for the 2020 US presidential elections. Their strategy involves increasing transparency through a “seven year library”, “cracking down on fake accounts”, “bringing in fact-checkers”, and “investing heavily in AI to take down harmful content” (). They’re still taking advertisement dollars and controversially excepting politicians from their 3rd party fact checkers — but they’re still taking some steps to address the issue. While Twitter, Google, and Facebook are targeting the issue to a lesser or greater extent, they have one thing in common: they’re all targeting the issue and letting the public know they’re doing so. Why? Because their users are starting to demand so. These policy changes can be ultimately traced back to Russia’s illegal meddling of the 2016 US presidential election. Russian interference involved hacking key people in the Clinton’s campaign, intruding into voters databases, and most notably using social networks (Facebook, Twitter, and Google+, amongst many others) to discourage African Americans from voting, encouraging conservatives to vote, and creating troll accounts to systematically criticize Hillary Clinton and support Donald Trump. All in all, the overall strategy was to harm the likes of the democratic candidate from winning the presidential race, as Russian president Vladimir Putin thought Clinton’s electoral win would be detrimental to Russia (). The scandal came to light on September 2016, and led to an FBI investigation which Trump unsuccessfully attempted to dismantle by terminating FBI director James Comey (). After Comey’s discharge, Democrats pushed for Special Counsel investigation, which they ended up getting: Former FBI agent Robert Mueller continued the work of Comey, conducting a two year investigation (). On April of 2019, the report was published with an ambivalent conclusion that reached no final verdict: “while this report does not conclude that the President committed a crime, it also does not exonerate him” (). Despite this anticlimactic outcome, the scandal captured the national conversation for more than two years, and by the end of the investigation the public was aware of the vulnerabilities of the US’s electoral safety and the role that social networks could potentially play on an election (). Social media networks were called out both by the media and the general public, and since then many of the involved tech businesses have publicly apologized or recognized their mistakes: Tumblr has published a “public record of usernames linked to state sponsored disinformation campaigns” (), Twitter’s former CEO and co-founder Evan Williams apologized “for making Trump’s presidency possible” while on Twitter’s board (), and Mark Zuckerberg apologized for both the Cambridge Analytica breach and the Russian scandal in the aforementioned CNN interview (). Without the public pressure and the intense media coverage, these firms would have probably avoided recognizing their mistakes. Linking back to the economic discussion, without the rise of informed users challenging the companies practices, these companies would have avoided losing political advertisement dollars. As such, educating the users proves vital in ensuring a functional system. But just education will probably be insufficient. Recall the comparison made about how tobacco regulation throughout the 20th century sheds light on why regulation is appropriate in the digital privacy realm? How regulation was used to not only ensure consent but also informed consent? In this sense, the solution wasn’t only about education or regulation, but both: it was about guaranteeing education through regulation. On top of this, it’s important to note that the tobacco industry regulation has not been just about informed consent, but also about a direct dissuasion of smoking through excise taxes: since 1864 tobacco has been subject to a federal tax; by 1921 Iowa was the first state to pass an excise tax; “by 1969 all 50 states had followed suit” (). More importantly these taxes have progressively increased: in 1993, not a single state had an excise tax greater than a dollar (), while today 36 of the 50 states do, and 19 of those have an excise tax greater than two dollars ().

0 Comments

Digital Privacy: Problem, Solutions, and Implications

Research Arc 1: What's the current picture? Guiding questions:

The internet's impact on society has been immeasurable. Search engines, social networks, and the infinite amount of information these contain have transformed how we receive and process data. Our Facebook friend list, our Google search history, our Amazon purchases, and the reviews we've left on all of these platforms... they all come down to data. Everything comes down to data. Data grows. Today, there's data for every person — for every purpose. Data that's power. Paradoxically, this data explosion has left most people powerless, as the digital age has stripped most people of their privacy. For renowned computer scientist Andreas Weigend, privacy is dead. To Weigend, "the time has come to recognize that privacy is now only an illusion" (Weigend 47). He might be right. The current digital privacy model — The Notice and Choice model — is not built around protecting user privacy. Instead, it serves as a capitalistic market-place in which users relinquish part of their privacy in exchange for a service. As the name suggests, the Notice and Choice model involves notifying users about the web site's privacy policies and allowing the users to decide whether they engage with the site or not (Athey et al. 1). Theoretically, it makes perfect sense; in practice, it fails — miserably. In an article published under the law firm Cozen O'Connor, Harvard law school graduate Brian Kint explains how this Notice and Choice model is more of a Take it or Leave it: "When faced with the choice of access or no access, users will choose access, no matter how draconian an organization's information sharing practices may be" (Kint). Susan Athey, professor of economics at Stanford University, along with her colleagues Christian Catalini and Catherine Tucker (MIT professors in technological innovation and management, respectively), explored how the Notice and Choice model falls short to the complexity of human behavior. Their research paper, "The Digital Privacy Paradox: Small Money, Small Costs, Small Talk", studies the results of MIT's digital currency experiment, in which undergraduate students were asked about their digital privacy concerns and given $100 worth of Bitcoin. The students were then presented with a series of online privacy decisions, which were incentivized at different levels. Through the examination of this data, the researchers studied how users' privacy choices can be affected by incentives, reaching three main findings:

While Athey and her colleagues specifically explore why the model is too shallow to cope with the complexities of human behavior, other sources point out how Big-Tech is not interested in solving the problem. In their book Blown to Bits, Hal Abelson, Harry Lewis, and Ken Ledeen claim that "corporations, and other authorities are taking advantage of the chaos" (Abelson et al. 4). Derek Banta explains that the root of the problem lies in the fact that there is not one entity whose sole objective is to protect user privacy: "Promises are really hard to keep when you're trying to ride two horses. Take your credit card company as an example. They do things to protect your privacy, but at the same time, they have a data monetization strategy built right into their business model. Why is that? Because the internet and e-commerce are built on the value of private information. This is what creates the dilemma and, ultimately, the vulnerability" — the privacy vulnerability (Banta 3:58 — 4:24). On top of this, Brian Kint explains "privacy notices are [...] written from the perspective of protecting the organization from legal liability rather than from the perspective of genuinely and clearly informing users as to how their personal information might be shared" (Kint). With the system broken, Big-Data companies are intensively data-mining their users. In the article titled "The WIRED Guide to Your Personal Data (and Who Is Using It)", journalist Louise Matsakis comprehensively goes over the extent to which Big-Data companies are harvesting user data. As expected, "social media posts, location data, and search-engine queries" are all getting tracked through digital tools, like cookies, pixels, and tags. However, it can get a lot more invasive as some companies may track how you interact with a website/app — where you click, tap, zoom... (Matsakis). In their book Born Digital, Harvard law school professors John Palfrey and Urs Gasser go over what this level of tracking entails for "Digital Natives" — people born after 1980 who've grown up surrounded by technology: "By the time a Digital Native enters the workforce, there are hundreds — if not thousands — of digital files about her, held in different hands, each including a series of data points that relate to her and her activities. There is no way for a Digital Native to know that each of these files exists. [...] It would be impossible for her to stay on top of managing the files or even sorting out their sources and contents. And she wouldn't be able to correct the information even when it turned out to be inaccurate" (Palfrey and Gasser). Research Arc 2: Data Breaches, the Lack of Consent, and the Lack of Informed Consent Guiding questions:

Perhaps what's more worrying is that all of this data leaks (Abelson et al. 3). In the last couple of years, Uber, Equifax, Sony, and Yahoo have made major headlines after failing to protect their users' data — and there's no real reason for optimism (Armerding). Just in 2018, Quora, Under Armour, Marriot, Google, amongst many others, faced major data breaches (Leskin). Most notably, the Cambridge Analytica Data Scandal took place. The far-right wing political consulting group exploited "a loophole in Facebook's API that allowed third-party developers to collect data not only from users of their apps but from all of the people in those users' friends network" (Romano). Specifically, Cambridge Analytica simply surveyed 270,000 Facebook users through a 3rd party app; in doing so, they not only gathered the information of the 270,000 users who had agreed to the app's privacy terms but also that of their friends... amounting to a data pool of 87 million users (Chang). Cambridge Analytica then analyzed these users' likes, grouping them into different psychological categories, which it then used to launch targeted political advertisements (Hannes Grassegger & Mikael Krogerus). While evaluating the impact Cambridge Analytica has had on world politics could easily be the subject of an entire research paper (the firm was involved in Trump and Brexit campaigns), the key takeaway from this case study is that neither Notice or Choice was given to most of the 87 million users. For this Mark Zuckerberg apologized on a CNN interview: "This was a major breach of trust and I'm really sorry that this happened" (Zuckerberg). Notice that not notifying users that their data was being shared was a mistake in terms of Facebook's policy, but it is actually common practice for most in the digital industry. In fact, in his New York Times article titled "This Article is Spying on You", Carnegie Mellon computer science professor Timothy Libert explains that "only 10 percent of these outside parties [that mine your information] are disclosed in privacy policies of the news sites we studied, meaning even diligent readers will never learn who collects their data" (Libert). In other words, the Notice and Choice model is not applied across digital platforms, and even when it is, it omits critical information about the true scope of data collection. For the sake of the current model, however, let us assume that the Notice and Choice model is applied consistently and that it reports 100% of the data-mining third parties. Even if this is the case, and the user consents, the model falls short due to the lack of informed consent. As discussed previously, the model fails to provide a framework in which users can become informed participants of the digital community. Zuckerberg himself recognized this in April of 2018, when he testified for the Senate of Commerce and the Senate of Judiciary committees to inform their investigation on "Facebook, Social Media Privacy, and the Use and Abuse of Data": Specifically, when senator Lindsey Graham asked, "do you think the average consumer understands what they're signing up for?", Zuckerberg responded: "I don't think that the average person likely reads that whole document" (Committee on the Judiciary). That's partly on the user for not reading the document, but it's also on Facebook for taking their users' consent as good despite knowing it is uninformed. In this regard, perhaps some sort of government intervention is needed to hold firms like Facebook accountable, or at least make the people who accept Facebook's terms fully aware of what that entails for their privacy. Historically, there are cases in which government intervention was needed to address the lack of informed consent. Take the tobacco industry. In the mid-1960s more than 40% of the US adult population smoked tobacco (American Lung Association). However, throughout the 20th century and particularly since the 1950s, medical research had been consistently finding that tobacco smoke was harmful to human health (Proctor). The 1964 Surgeon's General report officialized these findings, leading to a series of government regulations aimed at informing the smokers of the adverse health effects that this activity entailed: by 1966 the Cigarette and Labelling and Advertising Act of 1965 came into effect and required a vague health warning to accompany each pack; by 1970 the Public Health and Cigarette Smoking Act made this labeling stronger; by 1984 the Comprehensive Smoking Education Act required tobacco packages and advertisement to rotate between four affirmative warnings; by 2009 former President Barack Obama signed the Family Smoking and Prevention Tobacco Control Act, which gave the FDA the power to regulate the industry, and led to the current push for graphic warnings (Center for Disease Control and Prevention). All in all, these measures helped decrease smoking from more than 40% in 1965 to 14% in 2017 (American Lung Association). It is important to note that for the US government, consent proved to be insufficient. It wasn't a matter of consent — of deciding to smoke — but of informed consent — of deciding to smoke knowing about the adverse health consequences. The same argument is valid to support regulation within the digital privacy realm. For a user to truly have digital privacy it's not only about consent — about agreeing to the site's terms — but also about informed consent — about agreeing to the site's terms knowing about the privacy implications. Research Arc 3: The Push for Regulation: Warren vs Big-Tech Guiding Questions:

Elizabeth Warren is one of the few politicians aware that there is a systematic problem and bold enough to point it out. The leading Democratic candidate for the US 2020 presidential election has made it clear that if she wins, she'll make sure to regulate the Big-Tech industry. In a Medium article titled "Here's how we can break up Big-Tech," Warren explains her plan to break up data-mining giants such as Amazon, Google, and Facebook; according to her, these companies have "too much power — too much power over our economy, our society, and our democracy" (Warren). That's a claim that is hard to challenge. More specifically, Warren gives three reasons to sustain her proposal to break up of Big-Tech: Number one, that these Big-Tech firms are engaging in anti-competitive behavior; Number two, that Big-Tech firms should not be capable of undermining the USA's electoral security; Number three, that Big-Tech firms are exploiting users' privacy. Warren has been very active on Twitter advocating for her proposal. In fact, to this date, Warren has tweeted more than 100 times about how breaking-up big tech would improve the industry's practices. This has not gone unnoticed for major Big-Tech players. At an internal Facebook meeting in July — which's audio was leaked to The Verge by one of the employees who assisted — Zuckerberg criticized Elizabeth Warren for thinking that "the right answer is to break-up the companies" (Zuckerberg / The Verge). Zuckerberg justified his position by recognizing that while they "care about [their] country and want to work with [their] government [...] if someone is going to challenge something that existential you go to that mat, and you fight" (Zuckerberg / The Verge). Major Big-Tech player Bill Gates, who is also the world's most generous philanthropist, agrees with Zuckerberg in that breaking up Big-Tech is not the answer. In an interview with Bloomberg, the Microsoft founder explained that for him Warren's proposal is overly simplistic: "If there is a way a company is behaving that you want to get rid off, then you should just say 'okay that's a banned behavior'. Splitting the company in two and having two people doing the bad thing doesn't seem like a solution" (Gates). Analyzing Warren's proposal, it's evident that she aims at providing a simple solution to a very complicated problem — which makes succeeding much harder. Warren makes distinctions between all the Big-Tech companies to present the many problems within the industry, but then reduces all of these problems to a single solution. For example, Warren has lumped Apple — a product selling company that does not capitalize on their users' data — with data selling companies Google and Facebook, and both product selling and data selling company Amazon. It does not add up. What also doesn't add up is the fact the for some arbitrary reason, the split-up will only target firms with annual revenues of more than $25 billion... but size in itself is not a crime — behavior is... Moreover, many of the behaviors Warren is so blatantly calling out are not specific to Big-Tech. Warren's rationale for splitting Apple is based on their ability to discriminate against third-party developers in favor of their own apps. In an interview with CNBC, Tim Cook disagrees with her by explaining the basis of any market: "You know [...] if you own a shop on the corner, you decide what goes in your store” (15:10 — 15:30). Certainly, there is a political component to Warren's proposal. Perhaps sparking this debate has been part of Warren's strategy to gain voters' preference. After all, before she is able to implement anything she has to win the electoral race. It might be too harsh to compare Trump's Build the Wall proposal with Warren's Break-Up Big-Tech proposal, but there are certain similarities. Number one, both capitalize on people's fear. For Trump, it's been the fear of immigration; for Warren, it's been the fear of surveillance. Number two, they've both directed these fears to a single entity. For Trump, the scapegoat are the immigrants; For Warren, the scapegoat are the Big-Tech companies. Of course, there's a huge moral difference between singling out marginalized immigrants with little political representation and singling out multibillion-dollar businesses that spend millions of dollars in political lobbies. However, the political strategy is the same. To a certain extent, it's populism. Why has Warren called out Facebook and Amazon much more than Google or Apple? It's not about revenue or data collection capabilities, but political impact. The Cambridge Analytica breach deteriorated Facebook's reputation, and ever since Jeff Bezos became the richest person in the world there's been certain resentment towards Amazon — and Warren is capitalizing. In reality, Big-Tech firms are not evil. In fact, they're doing lots of good: Facebook is connecting people in unimaginable ways, Amazon is providing a market-place for entrepreneurs to thrive, Google provides tools that are accessible to all, and Apple has saved lives by alerting their Apple Watch users if the watch senses signs of Afib, amongst others. There's still the problem of digital privacy, but solving that problem by splitting the firms and hoping that would somehow fix the industry seems reckless. Perhaps it's too unfair to judge the content of a proposal as if it were a finalized bill. Warren has been successful in getting a nation-wide conversation started, making people aware of the degree of power that these Big-Tech companies have, and at least attempting to address an evident issue. Research Arc 4: Digital Privacy as an Economic Problem Guiding Questions:

However, in doing so, it wrecks the business model of data-mining companies. Why? Because why would any person want their data to be used and sold if it's not necessary? Everyone is going to opt-out. That's like going over to store and having the option to either pay or not pay... but either way, you get the product. It makes no economic sense and is unsustainable. Regulators, however, want to take it even further. California Governor Gavin Newsome has proposed the idea of a 'data dividend' aimed at rebalancing the power structure between Big-Tech companies and their users. According to the governor, “California’s consumers should also be able to share in the wealth that is created from their data” (38:50 — 39:53). Nevertheless, users do reap the benefits of their data: users have access to online services, information, and networks for free — at least in terms of money. Up to now, data has been the way users have been paying for these 'free' services, and all of these regulations simply put at risk the free model. As Dan Rua explains, the only reason why most sites are free is "because of advertisements working" (Rua qtd. in Vice). If Big-Data companies' ability to collect and sell information is restrained, these companies will simply need to find another way to make a profit. As such, there would be a systematic switch to "paid alternatives such as the Freemium model, the Fee-for-service model or the Subscription model" which would, in turn, worsen the digital divide and further inequality (Sanchez and Viejo 2). As expected, this is something most users don't even want. In the aforementioned study "Small Money, Small Costs, Small Talk", professors Athey, Catalini, and Tucker found that "when expressing a preference for privacy is essentially costless as it is in surveys, consumers are eager to express such a preference, but when faced with small costs this taste for privacy quickly dissipates" (Athey et al). Caleb Fuller examines this dissonance from a purely economic lense and goes even further by claiming that digital privacy paradox may not even exist: "it is possible to explain the so-called "privacy paradox" by showing that individuals express greater demands for digital privacy when they are not forced to consider the opportunity cost of that choice" (Fuller 28). Examined economically, for most people privacy is simply a higher quality good — they see value in it, but are not willing to pay for it. Fuller concludes that "consumers prefer exchanging information to exchanging money" (Fuller 28). Does this signify a market failure? Fuller believes not. The economics professor identifies the three sources where digital privacy market failure could potentially arise — asymmetric information between businesses and users, users' behavioral biases, and data resale externalities — and rejects these. Regarding asymmetric information, Fuller claims that in every complex good market no one is perfectly informed. Regarding behavioral biases, Fuller explains that users are not biased but simply reacting to constraints (users prefer exchanging data than paying for the service). Regarding data reselling externalities, he explains that these are priced in at the moment of the initial transaction. All in all, Fuller concludes that evidence for market failure lacks and that, as a result, the push for regulation should be reconsidered. Fuller, however, is too absolute in his analysis. While he identified a key issue regarding asymmetric information in the market — that “respondents clearly are far less well-informed about how Google uses their data than that personal information is collected” — Fuller overlooked its potential implications. When he later claims that the data reselling externalities are priced in at the moment the transaction is made, that opposes his own finding that individuals are uninformed about how their data is used. In other words, if users don’t know how their data is used, how could they possibly price data reselling externalities the moment they engage in the transaction? Moreover, even if users are well-informed, they might then fall to behavioral biases, as they might irrationally believe that data reselling is not going to affect them specifically. Put more succinctly, optimism bias. Again, going back to Tobacco, this is similar to the fact that amongst smokers, x% think that they’ll end up developing smoke related medical health problems, while xx% end up doing so (). In summary and more economical terms, asymmetric information and behavioral biases in regards to data reselling creates the market failure. Yet even if market failure does not apply, it is hard to compare personal data to other complex goods, as privacy is considered a fundamental human right. The notion of selling a human right is — to say the least — problematic. However, Fuller’s economic analysis is still an incredible contribution to the conversation as it sheds light on how we should go about solving the issue: It’s all about changing demand... about changing consumer preferences. If we increase the value users put on their privacy, users will start demanding privacy preserving options and be more willing to pay for these. In doing so, the companies many politicians have blatantly called out for simply reacting to consumer demand will adjust. As such, perhaps we should not start with regulation but education. Planning (Expansions of Already Written Arcs, and Arcs to be Written): Research Arc 1 Expansion:

Research Arc 3 Expansion:

Research Arc 4 Expansion:

Write Research Arc 5:

Sources for Arc 4 Expansion: CCPA & GDPR

https://www.youtube.com/watch?v=z6Kez7o6n0k https://www.youtube.com/watch?v=LU-o9ghQ9S4 https://oag.ca.gov/system/files/attachments/press_releases/CCPA%20Fact%20Sheet%20%2800000002%29.pdf https://www.ana.net/blogs/show/id/rr-blog-2019-01-California-Consumers-Express-Concerns-About-The-California-Consumer-Privacy-Act+ https://www.americanbar.org/groups/business_law/publications/committee_newsletters/bcl/2019/201902/fa_9/ https://www.irmi.com/articles/expert-commentary/a-summary-of-ccpa-of-2018 https://www.helpnetsecurity.com/2019/02/04/gdpr-ccpa-differences/ https://fpf.org/2018/11/28/fpf-and-dataguidance-comparison-guide-gdpr-vs-ccpa/ https://www.bakerlaw.com/webfiles/Privacy/2018/Articles/CCPA-GDPR-Chart.pdf https://fpf.org/wp-content/uploads/2018/11/GDPR_CCPA_Comparison-Guide.pdf https://oag.ca.gov/privacy/ccpa https://oag.ca.gov/sites/all/files/agweb/pdfs/privacy/ccpa-proposed-regs.pdf Plan for this week: Definitely expand arc 4 and, if possible, write arcs 5 and 6. Michele Makhlouf | Blog: https://yesnoperhaps.weebly.com/blog

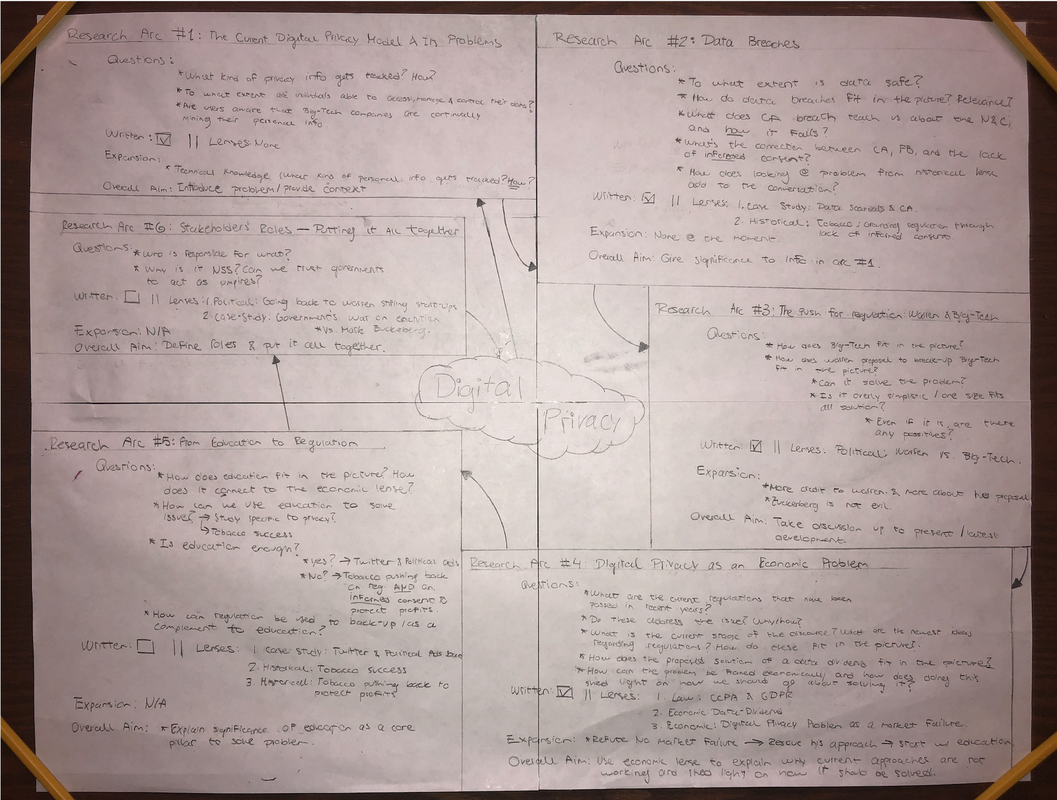

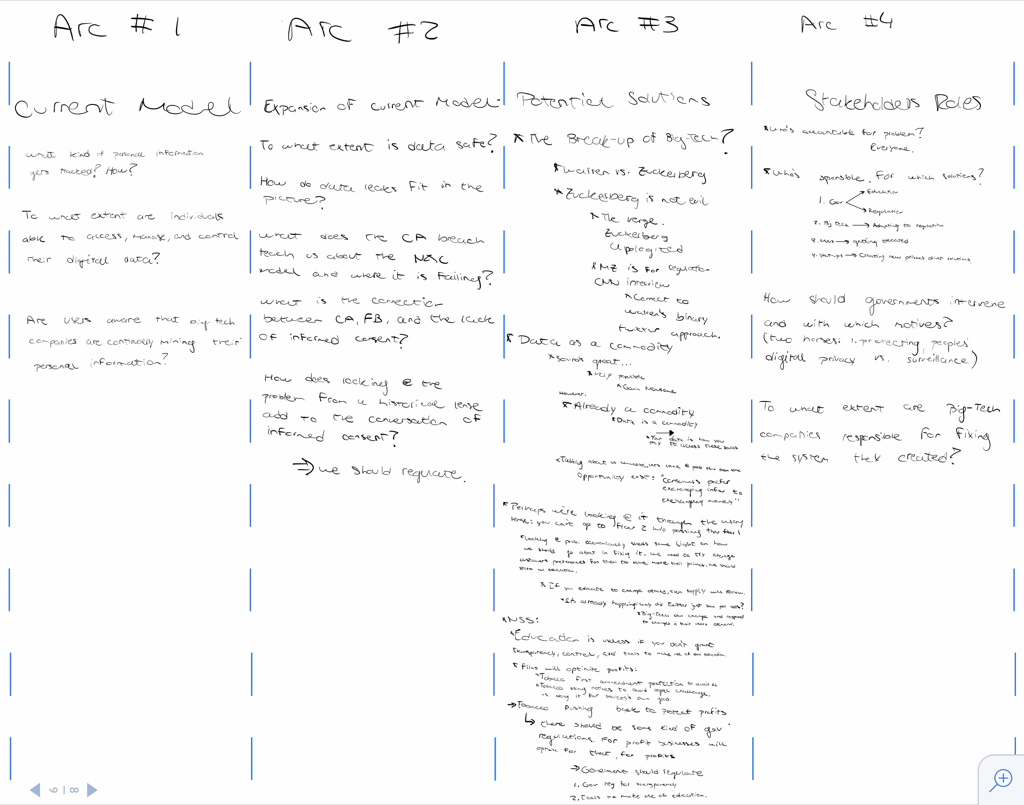

Context and Overall Structure: My project focuses on digital privacy. I go-over the current situation, explain the limitations of the current Notice and Choice model, and critically analyze different solutions to the problem. To ground this research/analysis, I’ve used different lenses (historical, case study, political and economic lenses). Over the past few weeks, I've written out paragraphs for research arcs one, two, three, and four… though some of the ideas expressed in these paragraphs might change. Regarding the work I have ahead of myself, I still have to write research arcs 5 and 6, and also revise all of the previous arcs. One thing to note when reading my project is that I wrote each arc with the previous one in mind so that the transition between them is swift. However, the guiding questions chop them up. As such, you might want to read from arc to arc without reading the guiding questions, referring to them later if needed. The following is my overall structure:

Digital Privacy: Problem, Solutions, and Implications Research Arc 1: What's the current picture? Guiding questions:

The internet's impact on society has been immeasurable. Search engines, social networks, and the infinite amount of information these contain have transformed how we receive and process data. Our Facebook friend list, our Google search history, our Amazon purchases, and the reviews we've left on all of these platforms... they all come down to data. Everything comes down to data. Data grows. Today, there's data for every person — for every purpose. Data that's power. Paradoxically, this data explosion has left most people powerless, as the digital age has stripped most people of their privacy. For renowned computer scientist Andreas Weigend, privacy is dead. To Weigend, "the time has come to recognize that privacy is now only an illusion" (Weigend 47). He might be right. The current digital privacy model — The Notice and Choice model — is not built around protecting user privacy. Instead, it serves as a capitalistic market-place in which users relinquish part of their privacy in exchange for a service. As the name suggests, the Notice and Choice model involves notifying users about the web site's privacy policies and allowing the users to decide whether they engage with the site or not (Athey et al. 1). Theoretically, it makes perfect sense; in practice, it fails — miserably. In an article published under the law firm Cozen O'Connor, Harvard law school graduate Brian Kint explains how this Notice and Choice model is more of a Take it or Leave it: "When faced with the choice of access or no access, users will choose access, no matter how draconian an organization's information sharing practices may be" (Kint). Susan Athey, professor of economics at Stanford University, along with her colleagues Christian Catalini and Catherine Tucker (MIT professors in technological innovation and management, respectively), explored how the Notice and Choice model falls short to the complexity of human behavior. Their research paper, "The Digital Privacy Paradox: Small Money, Small Costs, Small Talk", studies the results of MIT's digital currency experiment, in which undergraduate students were asked about their digital privacy concerns and given $100 worth of Bitcoin. The students were then presented with a series of online privacy decisions, which were incentivized at different levels. Through the examination of this data, the researchers studied how users' privacy choices can be affected by incentives, reaching three main findings:

While Athey and her colleagues specifically explore why the model is too shallow to cope with the complexities of human behavior, other sources point out how Big-Tech is not interested in solving the problem. In their book Blown to Bits, Hal Abelson, Harry Lewis, and Ken Ledeen claim that "corporations, and other authorities are taking advantage of the chaos" (Abelson et al. 4). Derek Banta explains that the root of the problem lies in the fact that there is not one entity whose sole objective is to protect user privacy: "Promises are really hard to keep when you're trying to ride two horses. Take your credit card company as an example. They do things to protect your privacy, but at the same time, they have a data monetization strategy built right into their business model. Why is that? Because the internet and e-commerce are built on the value of private information. This is what creates the dilemma and, ultimately, the vulnerability" — the privacy vulnerability (Banta 3:58 — 4:24). On top of this, Brian Kint explains "privacy notices are [...] written from the perspective of protecting the organization from legal liability rather than from the perspective of genuinely and clearly informing users as to how their personal information might be shared" (Kint). With the system broken, Big-Data companies are intensively data-mining their users. In the article titled "The WIRED Guide to Your Personal Data (and Who Is Using It)", journalist Louise Matsakis comprehensively goes over the extent to which Big-Data companies are harvesting user data. As expected, "social media posts, location data, and search-engine queries" are all getting tracked through digital tools, like cookies, pixels, and tags. However, it can get a lot more invasive as some companies may track how you interact with a website/app — where you click, tap, zoom... (Matsakis). In their book Born Digital, Harvard law school professors John Palfrey and Urs Gasser go over what this level of tracking entails for "Digital Natives" — people born after 1980 who've grown up surrounded by technology: "By the time a Digital Native enters the workforce, there are hundreds — if not thousands — of digital files about her, held in different hands, each including a series of data points that relate to her and her activities. There is no way for a Digital Native to know that each of these files exists. [...] It would be impossible for her to stay on top of managing the files or even sorting out their sources and contents. And she wouldn't be able to correct the information even when it turned out to be inaccurate" (Palfrey and Gasser). Research Arc 2: Data Breaches, the Lack of Consent, and the Lack of Informed Consent Guiding questions:

Perhaps what's more worrying is that all of this data leaks (Abelson et al. 3). In the last couple of years, Uber, Equifax, Sony, and Yahoo have made major headlines after failing to protect their users' data — and there's no real reason for optimism (Armerding). Just in 2018, Quora, Under Armour, Marriot, Google, amongst many others, faced major data breaches (Leskin). Most notably, the Cambridge Analytica Data Scandal took place. The far-right wing political consulting group exploited "a loophole in Facebook's API that allowed third-party developers to collect data not only from users of their apps but from all of the people in those users' friends network" (Romano). Specifically, Cambridge Analytica simply surveyed 270,000 Facebook users through a 3rd party app; in doing so, they not only gathered the information of the 270,000 users who had agreed to the app's privacy terms but also that of their friends... amounting to a data pool of 87 million users (Chang). Cambridge Analytica then analyzed these users' likes, grouping them into different psychological categories, which it then used to launch targetted political advertisements (Hannes Grassegger & Mikael Krogerus). While evaluating the impact Cambridge Analytica has had on world politics could easily be the subject of an entire research paper (the firm was involved in Trump and Brexit campaigns), the key takeaway from this case study is that neither Notice or Choice was given to most of the 87 million users. For this Mark Zuckerberg apologized on a CNN interview: "This was a major breach of trust and I'm really sorry that this happened" (Zuckerberg). Notice that not notifying users that their data was being shared was a mistake in terms of Facebook's policy, but it is actually common practice for most in the digital industry. In fact, in his New York Times article titled "This Article is Spying on You", Carnegie Mellon computer science professor Timothy Libert explains that "only 10 percent of these outside parties [that mine your information] are disclosed in privacy policies of the news sites we studied, meaning even diligent readers will never learn who collects their data" (Libert). In other words, the Notice and Choice model is not applied across digital platforms, and even when it is, it omits critical information about the true scope of data collection. For the sake of the current model, however, let us assume that the Notice and Choice model is applied consistently and that it reports 100% of the data-mining third parties. Even if this is the case, and the user consents, the model falls short due to the lack of informed consent. As discussed previously, the model fails to provide a framework in which users can become informed participants of the digital community. Zuckerberg himself recognized this in April of 2018, when he testified for the Senate of Commerce and the Senate of Judiciary committees to inform their investigation on "Facebook, Social Media Privacy, and the Use and Abuse of Data": Specifically, when senator Lindsey Graham asked, "do you think the average consumer understands what they're signing up for?", Zuckerberg responded: "I don't think that the average person likely reads that whole document" (Committee on the Judiciary). That's partly on the user for not reading the document, but it's also on Facebook for taking their users' consent as good despite knowing it is uninformed. In this regard, perhaps some sort of government intervention is needed to hold firms like Facebook accountable, or at least make the people who accept Facebook's terms fully aware of what that entails for their privacy. Historically, there are cases in which government intervention was needed to address the lack of informed consent. Take the tobacco industry. In the mid-1960s more than 40% of the US adult population smoked tobacco (American Lung Association). However, throughout the 20th century and particularly since the 1950s, medical research had been consistently finding that tobacco smoke was harmful to human health (Proctor). The 1964 Surgeon's General report officialized these findings, leading to a series of government regulations aimed at informing the smokers of the adverse health effects that this activity entailed: by 1966 the Cigarette and Labelling and Advertising Act of 1965 came into effect and required a vague health warning to accompany each pack; by 1970 the Public Health and Cigarette Smoking Act made this labeling stronger; by 1984 the Comprehensive Smoking Education Act required tobacco packages and advertisement to rotate between four affirmative warnings; by 2009 former President Barack Obama signed the Family Smoking and Prevention Tobacco Control Act, which gave the FDA the power to regulate the industry, and led to the current push for graphic warnings (Center for Disease Control and Prevention). All in all, these measures helped decrease smoking from more than 40% in 1965 to 14% in 2017 (American Lung Association). It is important to note that for the US government, consent proved to be insufficient. It wasn't a matter of consent — of deciding to smoke — but of informed consent — of deciding to smoke knowing about the adverse health consequences. The same argument is valid to support regulation within the digital privacy realm. For a user to truly have digital privacy it's not only about consent — about agreeing to the site's terms — but also about informed consent — about agreeing to the site's terms knowing about the privacy implications. Research Arc 3: The Push for Regulation: Warren vs Big-Tech Guiding Questions:

Elizabeth Warren is one of the few politicians aware that there is a systematic problem and bold enough to point it out. The leading Democratic candidate for the US 2020 presidential election has made it clear that if she wins, she'll make sure to regulate the Big-Tech industry. In a Medium article titled "Here's how we can break up Big-Tech," Warren explains her plan to break up data-mining giants such as Amazon, Google, and Facebook; according to her, these companies have "too much power — too much power over our economy, our society, and our democracy" (Warren). That's a claim that is hard to challenge. More specifically, Warren gives three reasons to sustain her proposal to break up of Big-Tech: Number one, that these Big-Tech firms are engaging in anti-competitive behavior; Number two, that Big-Tech firms should not be capable of undermining the USA's electoral security; Number three, that Big-Tech firms are exploiting users' privacy. Warren has been very active on Twitter advocating for her proposal. In fact, to this date, Warren has tweeted more than 100 times about how breaking-up big tech would improve the industry's practices. This has not gone unnoticed for major Big-Tech players. At an internal Facebook meeting in July — which's audio was leaked to The Verge by one of the employees who assisted — Zuckerberg criticized Elizabeth Warren for thinking that "the right answer is to break-up the companies" (Zuckerberg / The Verge). Zuckerberg justified his position by recognizing that while they "care about [their] country and want to work with [their] government [...] if someone is going to challenge something that existential you go to that mat, and you fight" (Zuckerberg / The Verge). Major Big-Tech player Bill Gates, who is also the world's most generous philanthropist, agrees with Zuckerberg in that breaking up Big-Tech is not the answer. In an interview with Bloomberg, the Microsoft founder explained that for him Warren's proposal is overly simplistic: "If there is a way a company is behaving that you want to get rid off, then you should just say 'okay that's a banned behavior'. Splitting the company in two and having two people doing the bad thing doesn't seem like a solution" (Gates). Analyzing Warren's proposal, it's evident that she aims at providing a simple solution to a very complicated problem — which makes succeeding much harder. Warren makes distinctions between all the Big-Tech companies to present the many problems within the industry, but then reduces all of these problems to a single solution. For example, Warren has lumped Apple — a product selling company that does not capitalize on their users' data — with data selling companies Google and Facebook, and both product selling and data selling company Amazon. It does not add up. What also doesn't add up is the fact the for some arbitrary reason, the split-up will only target firms with annual revenues of more than $25 billion... but size in itself is not a crime — behavior is... Moreover, many of the behaviors Warren is so blatantly calling out are not specific to Big-Tech. Warren's basis for splitting Apple is based on their ability to discriminate against third-party developers in favor of their own apps. In an interview with CNBC, Tim Cook disagrees with her by explaining the basis of any market: "You know [...] if you own a shop on the corner, you decide what goes in your store” (15:10 — 15:30). Certainly, there is a political component to Warren's proposal. Perhaps sparking this debate has been part of Warren's strategy to gain voters' preference. After all, before she is able to implement anything she has to win the electoral race. It might be too harsh to compare Trump's Build the Wall proposal with Warren's Break-Up Big-Tech proposal, but there are certain similarities. Number one, both capitalize on people's fear. For Trump, it's been the fear of immigration; for Warren, it's been the fear of surveillance. Number two, they've both directed these fears to a single entity. For Trump, the scapegoat are the immigrants; For Warren, the scapegoat are the Big-Tech companies. Of course, there's a huge moral difference between singling out marginalized immigrants with little political representation and singling out multibillion-dollar businesses that spend millions of dollars in political lobbies. However, the political strategy is the same. To a certain extent, it's populism. Why has Warren called out Facebook and Amazon much more than Google or Apple? It's not about revenue or data collection capabilities, but political impact. The Cambridge Analytica breach deteriorated Facebook's reputation, and ever since Jeff Bezos became the richest person in the world there's been certain resentment towards Amazon. The debate over whether Bezos should have such level wealth is completely secondary — the point is Warren is politically biased. Big-Tech firms are not evil. In fact, they're doing lots of good: Facebook is connecting people in unimaginable ways, Amazon is providing a market-place for entrepreneurs to thrive, Google provides tools that are accessible to all, and Apple has saved lives by alerting their Apple Watch users if the watch senses signs of Afib, amongst others. There's still the problem of digital privacy, but solving that problem by splitting the firms and hoping that would somehow fix the industry seems reckless. Perhaps it's too unfair to judge the content of a proposal as if it were a finalized bill. Warren has been successful in getting a nation-wide conversation started, making people aware of the degree of power that these Big-Tech companies have, and at least attempting to address an evident issue. Research Arc 4: Digital Privacy as an Economic Problem Guiding Questions:

In this sense, California is far ahead than the rest of the United States. After the Cambridge Analytica scandal, the state took steps to protect user privacy, passing the California Consumer Privacy Act of 2018 (CCPA). Similar to the GDPR, CCPA is ... ... In addition, the CCPA will force companies to provide a "clear and conspicuous link on the business’s Internet homepage, titled “Do Not Sell My Personal Information,” [....] that allows the user to "opt-out of the sale" of their data without losing access to the service. In other words, the CCPA brings real actual choice to what today is the 'Take-it or Leave-it'. However, in doing so, it wrecks the business model of data-mining companies. Why? Because why would any user want their data to be used and sold if it's not necessary? Everyone is going to opt-out. That's like going over to store and having the option to either pay or not pay... but either way, you get the product. It makes no economic sense and is unsustainable. Regulators want to take it even further. California Governor Gavin Newsome has proposed the idea of a 'data dividend' aimed at rebalancing the power structure between Big-Tech companies and their users. According to the governor, “California’s consumers should also be able to share in the wealth that is created from their data” (). Nevertheless, users do reap the benefits of their data: users have access to online services, information, and networks for free — at least in terms of money. Up to now, data has been the way users have been paying for these 'free' services, and all of these regulations simply put at risk the free model. As Dan Rua explains, the only reason why most sites are free is "because of advertisements working" (Rua qtd. in Vice). If Big-Data companies' ability to collect and sell information is restrained, these companies will simply need to find another way to make a profit. As such, there would be a systematic switch to "paid alternatives such as the Freemium model, the Fee-for-service model or the Subscription model" which would, in turn, worsen the digital divide and further inequality (Sanchez and Viejo 2). Leaving these issues aside, this is something most users don't even want. In the aforementioned study "Small Money, Small Costs, Small Talk", professors Athey, Catalini, and Tucker found that "when expressing a preference for privacy is essentially costless as it is in surveys, consumers are eager to express such a preference, but when faced with small costs this taste for privacy quickly dissipates" (Athey et al). Caleb Fuller examines this dissonance from a purely economic lense and goes even further by claiming that digital privacy paradox may not even exist: "it is possible to explain the so-called "privacy paradox" by showing that individuals express greater demands for digital privacy when they are not forced to consider the opportunity cost of that choice" (Fuller 28). Examined economically, for most people privacy is simply a higher quality good — they see value in it, but are not willing to pay for it. Fuller concludes that "consumers prefer exchanging information to exchanging money" (Fuller 28). The question is whether this signifies a market failure. Fuller answers this question by assessing digital privacy market failure from three sources: asymmetric information between businesses and users, users' behavioral biases, and data resale externalities. Regarding asymmetric information, Fuller claims that in every complex good market no one is perfectly informed. Regarding behavioral biases, Fuller explains that these can simply be attributed to constraints (users prefer exchanging data than paying or not having access to the service). Regarding data reselling externalities, he explains that these are priced in at the initial transaction. All in all, Fuller concludes that evidence for market failure lacks and that, as a result, the push for regulation should be reconsidered. Planning (Expansions of Already Written Arcs, and Arcs to be Written): Research Arc 1 Expansion:

Research Arc 3 Expansion:

Research Arc 4 Expansion:

Write Research Arc 5:

Last paragraph of research arc 3: Perhaps it's too unfair to judge the content of a proposal as if it were a finalized bill. Warren has been successful in getting a nation-wide conversation started, making people aware of the degree of power that these Big-Tech companies have, and at least attempting to address an evident issue.